Why I vibe-code but don't vibe-write

no rlvr on information transfer

[day 2/5]

I am a shameless vibecoder. I have not written a single line of code since November.

I stand by this: the models are simply great now. Opus 4.5 convinced me that programming from now on means wrangling claude code until the end times, and I see no reason not to.

I vibe-code for research because I don’t actually care what the model spits out. The pytorch code need not be intelligible, as long as it does what I want it to.1

Show me the incentive and I’ll show you the outcome. RLVR has given us very cracked coding models. Anthropic’s mission is literally making the best coding model. No company is pouring billions into getting really good at writing compact, coherent blogposts that are efficient at information transfer. This is a really tricky thing to optimize for, because there is no verifier that checks whether a reader actually understood you. It is not at all obvious what makes a human actually get something in a tutor setting. This is why models still get mogged by good teaching assistants. I would guess that the widespread LLM-as-a-tutor usage is mostly about availability, not quality.2

I’ve heard through the grapevine that AWS managers have found a brand new way to bully their poor underlings by asking: “What have you done today that you couldn’t have done by babying claude code?” Actually naming something you can’t do by babying claude code is left as an exercise to the reader.3

Anyway, my gripe today isn’t with middle-manager Marvin. It’s with vibe-writing.

People have ideas: messy, sloppy, disgusting ideas. Writing blogposts is mainly an exercise in unconfusing oneself. But it is much easier to open claude.ai and have a chat about your half-baked, barely coherent idea (after all, the more coherent it is in your mind, the easier it would be to just do the writing yourself), often without even realizing what you actually want to say.

But claude doesn’t care. He’s a very good boy, and he will write you a blogpost despite all of this.

Sadly, for now this just results in bad posts. A time will come when this isn’t true, but today they are generally unpleasant to read, seem completely oblivious to which sentences are actually load-bearing for information transfer, and carry hidden implications and arguments that the model inserted on its own. The whole thing ends up feeling like an undergrad trying to hit the word limit.

And worst of all, you will miss out on improving at one of the most generalizable skills ever!

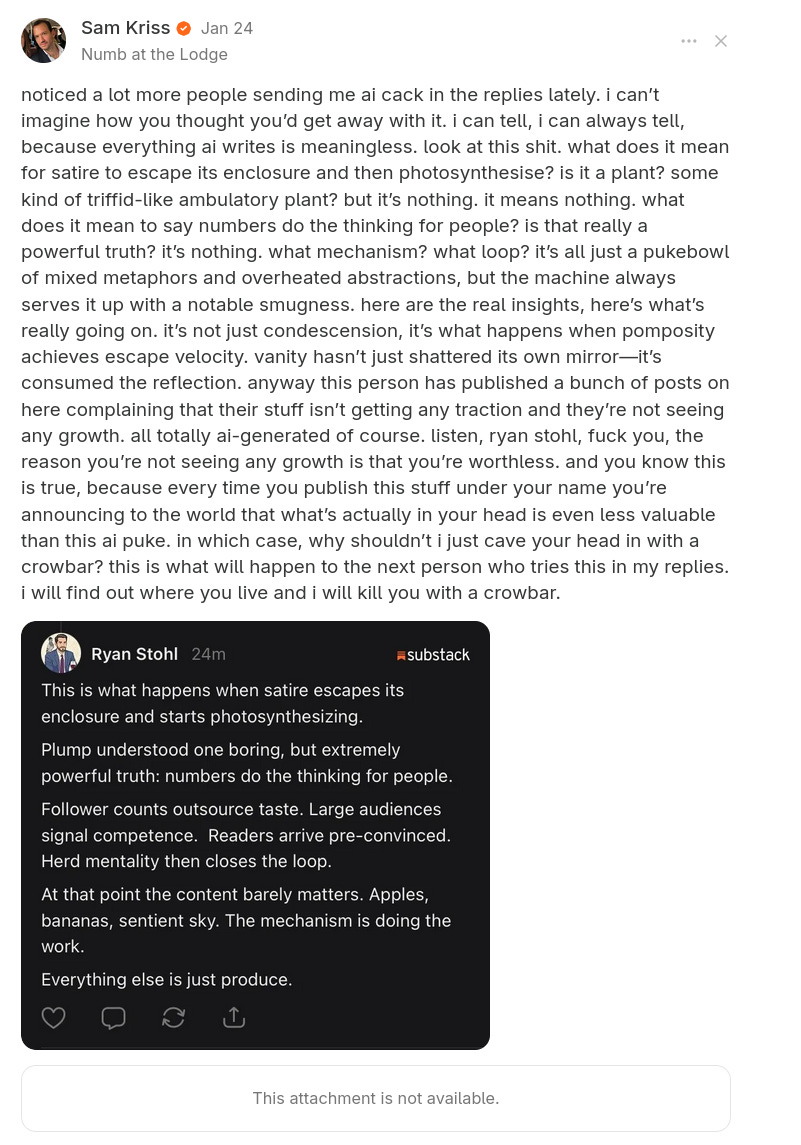

or as mister Kriss so eloquently put it:

In addition to the slop factor4, it’s also just a violation of the social contract. When I read an article, I am operating under the assumption that another mind is trying to transfer information to me, and that if I read the sentences enough times, there will be something brain-grokkable underneath. Finding out that these premises don’t hold, and that it was the shoggoth speaking all along, can be pretty annoying.

I think there is a pretty strong case that writing is a skill worth preserving. It generalizes to conversation, and, well, convincing someone of something is probably valuable until the end times.5

Also, I just like the process.

At most, I might want to iterate on it (the iterator being claude here). I really don’t fucking care if it’s ugly or 1000+ lines longer than it needs to be, this is research!!! Also, obviously, do actually check your code, and use agents for code review if the code is causing you to epistemically update on something.

They happen to be emergently OK at the task thanks to assistant post-training, and I’m sure the labs do try to optimize for this somewhat.

I can’t anymore, unless you impose the constraint that the code has to be actually readable.

By the way, I do think there will come a time very soon when models write better blogposts than I ever could.

But hey, what do I know? Lydia says this is a mere “system prompt issue”.

The hilarious part about these generated texts is that they so wonderfully and unwittingly imitate what it’s like to speak with an incompetent middle manager at a megacorp.

America’s conference rooms have long been full of these empty metaphors and long-winded monologues that everyone nods along to and pretends to comprehend. In reality, everyone has been thinking about where they’re going to eat lunch for the last 1000 human-generated tokens.

I also think it is just an aesthetic shame to use LLMs for writing. I saw Nick Cave make this point in an open letter. The way I’d phrase it is as a conjecture: by using LLMs this way, your writing style becomes a weighted average of the writing styles in the training data (though I guess which data it draws on depends on the prompt). We lose the aesthetic benefit of different writing styles, and possibly the benefit of collectively innovating and learning from each other about the way we write.